Something happened in one of my workshops earlier this month that I haven’t stopped thinking about since.

A participant (excitedly) told me he’d uploaded my training materials to Claude, then uploaded his notes from the Get Started with No-BS OKRs workshop that he’d completed, and asked Claude to build a skill based on the practices.

He shared what the tool produced, and it was… actually surprisingly good. Reading the simple steps that my practices break down to when interpreted by a machine made me feel pretty good about how accessible I’ve made the act of writing OKRs.

That same week, I was working with a large group of localizers, and their draft OKR quality was off the charts compared to the average. I said so a few times, and they loved the praise. People kept telling me the Get Started workshop was so good, it made it easy to write their OKRs.

The next day, when I tucked in for 1:1s with people, the puzzle pieces finally fell into place.

Nearly everyone I spoke with said that they’d taken their notes from the Get Started workshop and dropped them into [insert GPT of choice here], and out popped No-BS OKR drafts.

I mean — of course they did. With corporations adding “thou shalt use AI” clauses to their incentive programs, and the hype we’re seeing literally everywhere… of COURSE people are turning to GPT tools to help them come up with the “right” OKRs.

Over the last few weeks, what I’ve been hearing from other OKR practitioners for several months has really sunk in:

GPTs can now write OKRs.

And they can write textbook OKRs — OKRs that align with best practices.

But I’ve always thought: “Yeah… but creating OKRs is just the first step. Implementing OKRs is the hard part.”

And until recently, that’s been true. But when I sat down to write this blog post and actually fact-checked it, it was eye-opening.

Before we get to that, let’s just level set on one thing.

OKR writing was never the hard part.

Since the very beginning, I’ve done everything I can to make creating OKRs as simple as possible. Because the mechanics of creating OKRs aren’t actually the hard part: they’re really, truly, very simple.

What’s hard is what surrounds the mechanics of creating OKRs: articulating the vision of change that informs meaningful OKRs, and then what happens after you’ve written them. People’s mindsets, and human behavior: those are the parts that are truly challenging.

The part that leads to slow adoption — the part that makes DIY implementation so challenging and full of pitfalls, the part that separates OKRs-in-a-document from OKRs-that-change-how-people-work — is what surrounds the OKR writing.

The questions that determine whether your OKRs actually function are behavioral and organizational. They require judgment about your specific situation: your team’s capacity, your org’s maturity with this kind of thinking, its skills with conflict, the dynamics between people and functions, what you’ve already tried, and the baggage (or excitement) that people come into the process with. They can’t be answered by pattern-matching on the leading OKR books, however high-quality that body of work is. I’ve read every OKR book on the market — and none of them can answer these questions for your specific organization.

The hard part is the stuff that only surfaces when real people sit down to do this work.

So I fact-checked this post. GPTs are getting it right.

OK. So practitioners have broken OKR creation down into steps simple and clear enough that GPTs can execute them. But answers to technical questions about OKR implementation are the domain of human experts, right?

Eh… that depends.

Even a year ago, that was true. Models had such poor training that they confidently answered implementation questions with “best practices” that may sound good but fall apart in actual practice.

Now? Even public models without specialized training are able to answer OKR implementation questions technically correctly — at least for the average organization. A year ago, questions like the following (real questions from clients recently) may have yielded a poor answer from the GPTs:

- With finance OKRs, what about payroll? Does it have to have a key result?

- I want to reduce our monitoring errors from 75 million to 8 million. It doesn’t ladder to a company objective. Can I still write a KR for it?

- I have an important outcome I won’t know the result on until December 31st. What do I do in the meantime?

Today, I quibble with their language choices, but I can’t really fault the substance of their answers.

But correct information isn’t the same as helping people change.

If what you’re aiming to do is create OKRs to give your organization clear direction and alignment on your major measures of success (you’re going to put them on a slide, present them at your all-hands, then get to work and see how you do at achieving them), then there are organizations where a GPT might actually be able to support that entire arc of effort. You’ll probably have a better experience with an expert-trained model than with a general GPT, but the general GPTs seem to have learned from the leaders in the field (myself included), so even the public general models are pretty good at OKRs at this point.

With that workflow I just described, OKRs are information. They’re words and numbers on a page that serve the same purpose as a destination on a map: to help us understand where we’re headed together. GPTs are (minus hallucinations) fairly good at information.

But GPTs can only work with the information they have.

GPTs can’t observe body language or listen for subtleties of tone in human communication beyond the words that are captured in a transcript.

GPTs can answer questions and even analyze data based on what you feed into them and how you train them, but in my experience, they’re overzealous at spotting alignment, and without specific training they’re “pleasing machines”: their success metric is active usage, so they’re built to keep you coming back. That means they suffer from the same challenges some people do around telling the truth when it might be risky to do so.

GPTs answer “how” questions with mechanics — ChatGPT will give you ten tips that aren’t wrong if you ask it how to help your team shift from thinking about activity and tasks to creating key results that are actually measurable outcomes. The first few are some of the same mechanics I teach: clear separation of objectives, key results, and initiatives; lean into “why” and “so what” questions; require baselines and targets.

But at the end of that list, a reader has no more insight into how to actually help people make that shift than they had before they started. That’s because making the shift from the “comfort zone” of activity thinking to the risky unknown of measurable stretch goal setting is only partly an information challenge. It’s also a psychological, social, and behavioral challenge, and at least for now, that’s the domain of human experts, not pleasing machines.

I work with groups as large as 30 (or even sometimes 60) to create, review, and implement OKRs. If a GPT led the workshop, it could give instructions, answer questions based on its training and information, and at the end of the workshop, some of the participants may have draft OKRs.

The majority would not.

Different people enter that room with different definitions of related terms, different conditioned beliefs about goal setting and how “safe” it is to set goals at work, different suspicions about how the OKR process will be used, and different privileges or past trauma around workplace performance and goal setting.

A trained OKR coach reads that room. They notice who’s leaning in and who’s shutting down. They know when to push on precision and when to back off because someone needs to build trust with the process first. You can’t prompt your way to that.

Because those 30 people aren’t an “average organization.” They’re specific humans with specific histories — and helping specific humans change how they work is still human work.

“A pleasing machine will tell each of them what they want to hear. A human expert will tell them what they need to hear — and know the difference, and know when.”

When drafting gets faster, learning gets faster.

Another interesting advancement: When AI speeds up the drafting, it speeds up more than just the drafting.

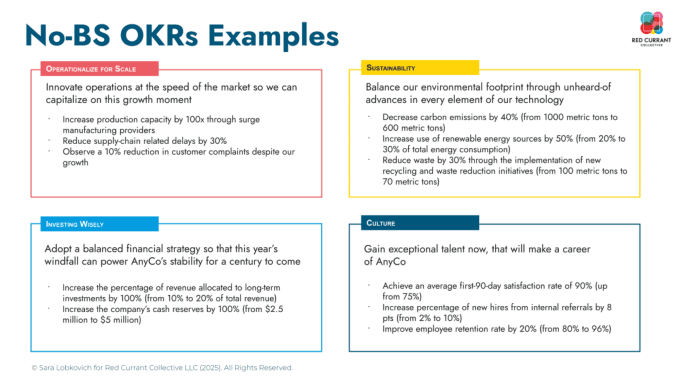

In a first cycle of OKR implementation, one of the biggest shifts most people make is learning the difference between activity and results: how to write truly measurable key results, and how to discern when something just needs to get done vs. when it needs a progress or success measure. This is where I’m seeing AI tools genuinely accelerate the work. Where early models couldn’t, the agents I’m training now allow clients to verbally process their thinking about their business (what they dream of being different) while the agent listens and turns that into potential draft key results that follow my No-BS key result formula:

[Increase / decrease / improve] [metric or observable phenomenon] by [change] from [start value] to [finish value]

So the person can stay focused on verbalizing or externalizing the change they dream of creating — their vision of change — and the agent can do what it’s good at: process that language into measurable draft key results.
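
If it helps to see how mechanical the formula itself is, here is a minimal Python sketch. Everything in it (the `KeyResult` name, its fields, the rule that picks "Increase" or "Decrease" from the direction of change) is an illustrative assumption, not a description of how any trained agent actually works. The numbers come from the monitoring-errors question earlier in this post.

```python
from dataclasses import dataclass


@dataclass
class KeyResult:
    """One draft key result. Field names are hypothetical, chosen for illustration."""
    metric: str    # the metric or observable phenomenon
    start: float   # start value (the baseline)
    finish: float  # finish value (the target)

    def render(self) -> str:
        # A key result needs a baseline and a different target.
        if self.finish == self.start:
            raise ValueError("start and finish values must differ")
        # Pick the verb from the direction of change. "Improve" is left out
        # here because choosing it takes human judgment, not arithmetic.
        verb = "Increase" if self.finish > self.start else "Decrease"
        change = abs(self.finish - self.start)
        return (
            f"{verb} {self.metric} by {change:,.0f} "
            f"from {self.start:,.0f} to {self.finish:,.0f}"
        )


draft = KeyResult(metric="monitoring errors", start=75_000_000, finish=8_000_000)
print(draft.render())
# Decrease monitoring errors by 67,000,000 from 75,000,000 to 8,000,000
```

Filling in the template is the easy part. Deciding which metric matters, and whether 8 million is the right target, is still a human call.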

Agents are great at formulas, and they’re good at listening when they’re well trained. They can’t make the decision for you, but they are better at hearing potential key results in verbal processing than most people are. (That said, that skill can be trained: ask me about No-BS OKR Coach Training if you’d like to hear more.)

I’ve put a lot of effort into making OKR creation simple and well-scaffolded — and people still struggle with the cognitive shift from activity to outcome thinking. Trained agents don’t. They don’t have the cognitive bias toward activity-thinking that humans do. It’s been genuinely surprising to watch.

What the second cycle of OKRs teaches you

During the first cycle of OKR implementation, organizations usually learn two things:

- They had surprises on outcome measures at the end of the term — showing they didn’t have leading indicators everywhere they needed.

- And they usually learn that they would benefit from more cross-functional OKRs — which means first getting people out of their functional silos and aligned on a shared vision of change.

By the second cycle, most organizations are dealing with something harder. Teams often come back to me at the end of Q1 and say: why didn’t you tell us that? Why didn’t we start with cross-functional localized OKRs? And my answer is always the same:

If I tell you it’s important, that’s different from you learning it’s important.

Also: it’s hard enough to learn OKRs (and especially key results) thinking functionally — adding cross-functionality adds a layer of alignment that most teams struggle to execute even when they know they need it, much less when an outside consultant is the one telling them to.

None of that is a writing problem. And none of it gets easier just because the OKR drafts come faster. But those learnings are happening faster. One theory is that when creating functional OKRs feels easier, people are less likely to dread the process, so they’re more open to the idea that “Hm… you know where these would be really helpful? Cross-functional alignment!” With older and more onerous drafting processes, they’re more likely to feel like “OMG we’re finally done writing OKRs, I never want to do that again.”

When we speed up drafting, I’m seeing teams get into cross-functional OKR creation right after they lock their first-cycle OKRs, instead of waiting until the end of the first implementation quarter. That moves cross-functional alignment significantly earlier.

This is why the work doesn’t end when the OKRs are written — and why the organizations I work with don’t stick around just because I help them write OKRs. (I mean — some do.) Most come back because I help them work through the judgment calls, the cultural dynamics, the places where the framework meets the messiness of actual organizational life. AI is genuinely useful for accelerating certain parts of OKR creation. But the human behavior part is still the hard part — and for now, that’s still the domain of human intelligence.

If you’re navigating the hard part

If any of this sounds familiar — if you’ve got OKRs now and you’re thinking: “Now what?” — that’s what I do best, and what I help clients with every day.

I’m opening up a limited number of Q&A calls specifically for folks who find me through this blog post. Bring your real “beyond writing OKRs” questions. This isn’t a sales call — it’s a brief working session to get your specific questions answered.